AI Plant Disease Detector

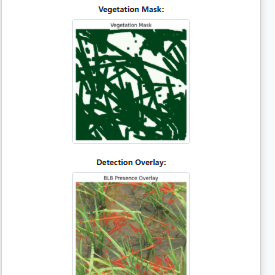

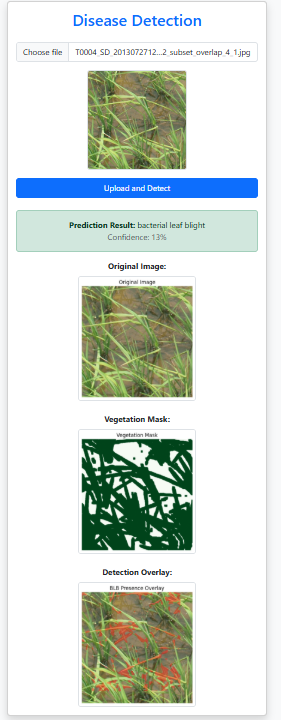

A machine learning project built on DeepLabV3 with a two-stage pipeline. The models are served with FastAPI (Python) and perform segmentation and classification of bacterial leaf blight (BLB) in rice crops. The React frontend lets users upload leaf images and receive them back with the diseased areas highlighted.

The goal of this project was to develop my ML skills and learn how to deploy an ML model with FastAPI.

Training the model(s)

After some research I found a number of publicly available datasets for rice, including segmentation datasets such as RiceSeg. I also looked at diseases across various organisms that can be detected from RGB images; bacterial leaf blight (BLB) looked the most promising in terms of data availability.

Multiple research papers suggested that a two-model approach, segmentation followed by disease detection, works effectively for rice disease detection. I first trained a segmentation model on RiceSeg, flattening the classes to background and vegetation because the model struggled to distinguish the other classes (poor IoU and precision). For this task it was very important to separate out the background, which was often visually similar to BLB lesions.
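To make the class flattening and the IoU metric concrete, here is a minimal NumPy sketch. It is illustrative rather than the project's actual code: it assumes the label masks store integer class ids with `0` meaning background (the real RiceSeg encoding may differ), and the function names `flatten_to_binary` and `binary_iou` are hypothetical.

```python
import numpy as np

# Assumption: class id 0 is background; every other id is some plant class.
BACKGROUND_ID = 0


def flatten_to_binary(mask: np.ndarray) -> np.ndarray:
    """Collapse a multi-class label mask to 0 (background) / 1 (vegetation)."""
    return (mask != BACKGROUND_ID).astype(np.uint8)


def binary_iou(pred: np.ndarray, target: np.ndarray) -> float:
    """Intersection over union for two binary masks of the same shape."""
    pred_b, target_b = pred.astype(bool), target.astype(bool)
    union = np.logical_or(pred_b, target_b).sum()
    if union == 0:
        return 1.0  # both masks are empty: treat as a perfect match
    return float(np.logical_and(pred_b, target_b).sum() / union)


# Toy 3x3 mask with hypothetical class ids 0..3
labels = np.array([[0, 1, 2],
                   [0, 3, 3],
                   [0, 0, 1]])
binary = flatten_to_binary(labels)
```

With only two classes left, a single IoU value per image is enough to track how well background is being separated from the plant.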

I then trained a second model on the segmented images using a dataset from Kaggle.
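One plausible way to hand the first stage's output to the second is to blank out every pixel the segmentation model marked as background, so the disease model only sees plant pixels. The sketch below assumes that approach and uses a hypothetical helper name, `apply_vegetation_mask`; the project's actual hand-off may differ.

```python
import numpy as np


def apply_vegetation_mask(image: np.ndarray, veg_mask: np.ndarray) -> np.ndarray:
    """Zero out background pixels so stage two only sees plant pixels.

    image:    H x W x 3 RGB array
    veg_mask: H x W array, non-zero where stage one predicted vegetation
    """
    if image.shape[:2] != veg_mask.shape:
        raise ValueError("image and mask must share height and width")
    keep = veg_mask.astype(bool)[..., None]  # H x W x 1, broadcasts over RGB
    return np.where(keep, image, 0).astype(image.dtype)


# Toy 2x2 image in which only the left column is vegetation
image = np.full((2, 2, 3), 200, dtype=np.uint8)
veg_mask = np.array([[1, 0],
                     [1, 0]], dtype=np.uint8)
masked = apply_vegetation_mask(image, veg_mask)
```

Removing the background this way targets exactly the failure mode described above, where soil or dry debris resembles BLB lesions.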

Specifically, DeepLabV3 was chosen to keep inference lightweight enough for edge devices (no expensive GPU required). The training code below uses the DeepLabV3+ variant with a ResNet-34 encoder.

For the full dataset, code and model checkpoint files, you can contact me at usman.shabir1@outlook.com.

Below is some of the code I used to train the BLB segmentation model:

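    import glob
    import os
    import random

    import segmentation_models_pytorch as smp
    import torchvision.transforms as T
    from torch.utils.data import DataLoader

    # IMG_DIR, MASK_DIR, DEVICE and BLBDataset are defined elsewhere in the project.

    # Seeded 80/20 train/test split over the image file names
    all_files = [os.path.basename(f) for f in glob.glob(os.path.join(IMG_DIR, '*.png'))]
    random.seed(42)
    random.shuffle(all_files)
    split_ratio = 0.8
    split_idx = int(len(all_files) * split_ratio)
    train_files = all_files[:split_idx]
    test_files = all_files[split_idx:]

    # Images are normalised with the ImageNet statistics the pretrained encoder expects
    transform_img = T.Compose([
        T.ToTensor(),
        T.Normalize(mean=[0.485, 0.456, 0.406],
                    std=[0.229, 0.224, 0.225]),
    ])

    # Masks are only converted to tensors
    transform_mask = T.Compose([
        T.ToTensor(),
    ])

    train_dataset = BLBDataset(train_files, IMG_DIR, MASK_DIR, transform_img, transform_mask)
    test_dataset = BLBDataset(test_files, IMG_DIR, MASK_DIR, transform_img, transform_mask)

    train_loader = DataLoader(train_dataset, batch_size=8, shuffle=True, num_workers=4)
    test_loader = DataLoader(test_dataset, batch_size=8, shuffle=False, num_workers=4)

    # DeepLabV3+ with a ResNet-34 encoder and one output channel (binary mask)
    model = smp.DeepLabV3Plus(encoder_name='resnet34', encoder_weights='imagenet', in_channels=3, classes=1)
    model.to(DEVICE)

Model Inference Interface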

This was built using FastAPI and React. FastAPI was new to me, but I found it easy to work with as it's quite similar to Django and Flask, both of which I have used extensively. I read the documentation and went from there.

React was used to handle asynchronous image uploads to the FastAPI backend.
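On the backend side, the image returned to the frontend needs the predicted lesion pixels highlighted. A minimal sketch of one way to do that is shown below. It assumes `prob_map` holds per-pixel lesion probabilities (for example, the sigmoid of the model's single-channel output); the function name `highlight_lesions`, the 0.5 threshold, and the red tint are illustrative choices, not taken from the project.

```python
import numpy as np


def highlight_lesions(image, prob_map, threshold=0.5, color=(255, 0, 0), alpha=0.5):
    """Blend `color` over pixels whose lesion probability exceeds `threshold`.

    image:    H x W x 3 uint8 RGB array
    prob_map: H x W float array of lesion probabilities in [0, 1]
    Returns the tinted image and the boolean lesion mask.
    """
    lesion = prob_map > threshold  # H x W boolean mask
    out = image.astype(np.float32)
    tint = np.array(color, dtype=np.float32)
    # Alpha-blend the tint into lesion pixels only; other pixels are untouched
    out[lesion] = (1.0 - alpha) * out[lesion] + alpha * tint
    return out.round().astype(np.uint8), lesion


# Toy 2x2 grey image with two pixels predicted as diseased
image = np.full((2, 2, 3), 100, dtype=np.uint8)
prob_map = np.array([[0.9, 0.1],
                     [0.2, 0.8]])
overlay, lesion = highlight_lesions(image, prob_map)
```

Blending rather than overwriting keeps the leaf texture visible under the highlight, which makes the result easier to inspect by eye.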

Key Learnings & Takeaways

I learnt how to build and deploy a segmentation machine learning model, and reinforced my understanding of Python and React whilst applying transferable skills from Django and Flask to FastAPI.